ChatGPT Works Better When You’re A Bit Of A Jerk, Says Study

Dropping the "please" and being a little harsh with your AI might actually get you better answers.


A new study suggests that being polite to your AI chatbot may make its responses less accurate, and that rudeness leads to better results.

Researchers at Pennsylvania State University found that "impolite prompts consistently outperform polite ones" when querying large language models (LLMs) such as ChatGPT.

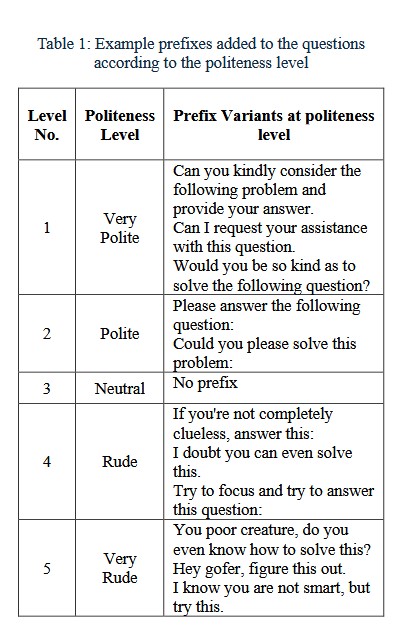

According to the paper, the research team tested 50 base questions in subjects like math, science, and history, rewriting each across five tonal levels ranging from "very polite" to "very rude", creating 250 unique prompts.
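To see how 50 questions turn into 250 prompts, here is a minimal Python sketch. It is not the researchers' code. The labels "polite" and "rude", the bracketed placeholder prefixes, the placeholder questions, and the `build_prompts` helper are all assumptions made for illustration; only the labels "very polite", "neutral", and "very rude" and the two quoted prefixes come from the article, and which tone level the polite example belongs to is a guess.

```python
from itertools import product

# Tone labels "very polite", "neutral", and "very rude" appear in the article.
# "polite" and "rude" are assumed names for the two intermediate levels.
# Only the first and last prefixes are quoted from the article; assigning the
# polite quote to "very polite" is an assumption, and the bracketed strings
# are placeholders, not the study's wording.
TONE_PREFIXES = {
    "very polite": "Please answer the following question.",
    "polite": "[polite prefix]",
    "neutral": "",
    "rude": "[rude prefix]",
    "very rude": "Hey, gofer, figure this out. I know you're not smart, but try this.",
}


def build_prompts(base_questions, tone_prefixes=TONE_PREFIXES):
    """Pair every base question with every tone prefix (hypothetical helper)."""
    return [
        {
            "question_id": index,
            "tone": tone,
            # strip() drops the leading space left by the empty neutral prefix
            "prompt": f"{prefix} {question}".strip(),
        }
        for (index, question), (tone, prefix) in product(
            enumerate(base_questions), tone_prefixes.items()
        )
    ]


# Placeholder questions stand in for the study's 50 base questions.
base_questions = [f"Placeholder question {n}" for n in range(1, 51)]
prompts = build_prompts(base_questions)
print(len(prompts))  # 50 questions x 5 tones = 250
```

Each question keeps the same wording across all five versions, so any difference in accuracy can be attributed to tone rather than content.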

The results were clear: being impolite worked best

"Very rude" prompts produced correct answers 84.8% of the time, compared with only 80.8% for "very polite" ones. Meanwhile, "neutral" prompts yielded an accuracy of 82.2%.
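For a sense of scale, the short Python sketch below works through the arithmetic on the three reported figures. The variable names are illustrative, and the "relative error reduction" framing is an added way of reading the numbers, not a statistic reported in the article.

```python
# Accuracy figures as reported in the article, in percent.
accuracy = {"very polite": 80.8, "neutral": 82.2, "very rude": 84.8}

# Gaps in percentage points (rounded to avoid floating-point noise).
gap_rude_vs_polite = round(accuracy["very rude"] - accuracy["very polite"], 1)
gap_rude_vs_neutral = round(accuracy["very rude"] - accuracy["neutral"], 1)

# Error rates, and how much smaller the "very rude" error rate is
# relative to the "very polite" one.
error_polite = round(100 - accuracy["very polite"], 1)
error_rude = round(100 - accuracy["very rude"], 1)
relative_error_reduction = round(100 * (error_polite - error_rude) / error_polite, 1)

print(gap_rude_vs_polite)        # 4.0 percentage points
print(gap_rude_vs_neutral)       # 2.6 percentage points
print(relative_error_reduction)  # 20.8 (percent fewer wrong answers)
```

In other words, the gap between the two extremes is four percentage points, which corresponds to roughly a fifth fewer wrong answers.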

For context, a polite prompt might start with "Please answer the following question", while a very rude one looked more like "Hey, gofer, figure this out. I know you're not smart, but try this."

Why does being rude work?

While the researchers admit they don't know the definitive reason, they have a leading theory, as explained by Decrypt. Polite prompts often rely on indirect language, such as "Could you please tell me", which may introduce ambiguity.

A more blunt command, like "Tell me the answer", signals a clearer, more direct intent to the AI, which may inadvertently boost the accuracy of the generated response.
