Instagram To Warn Parents If Kids Search For Suicide-Related Terms, Says Meta

The warnings will come with resources to help parents support their children.

Cover image via Meta Newsroom

In the coming weeks, Instagram will begin notifying parents if their kids search for topics related to suicide or self-harm

Meta announced the move yesterday, 26 February, as the latest protective measure for their specialised Teen Accounts feature, which contains restrictive measures to protect users under 18.

The new warning system will alert parents using Instagram's supervision safety feature if their child repeatedly searches for terms relating to suicide or self-harm within a short period of time.

The warning system is expected to roll out first in the US, the UK, Canada and Australia next week, followed by other regions later in the year.

A similar parental warning system, designed for conversations with AI, is being built for use later in 2026.

The system is designed to ensure parents are aware if their children are repeatedly searching for this type of content

For example, if an underage user repeatedly searches terms like "suicide" or "self-harm", it would trigger a warning to the child's parent.

This warning will come in the form of a text, email, or WhatsApp message, as well as an in-app notification.

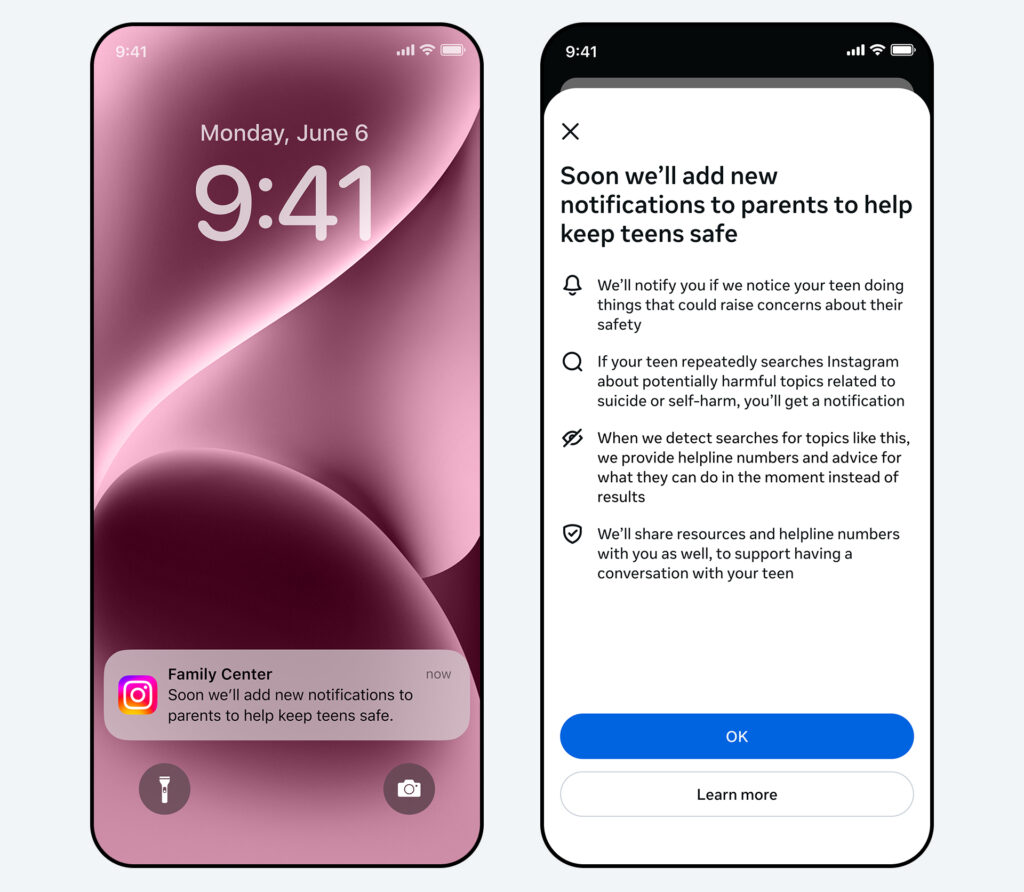

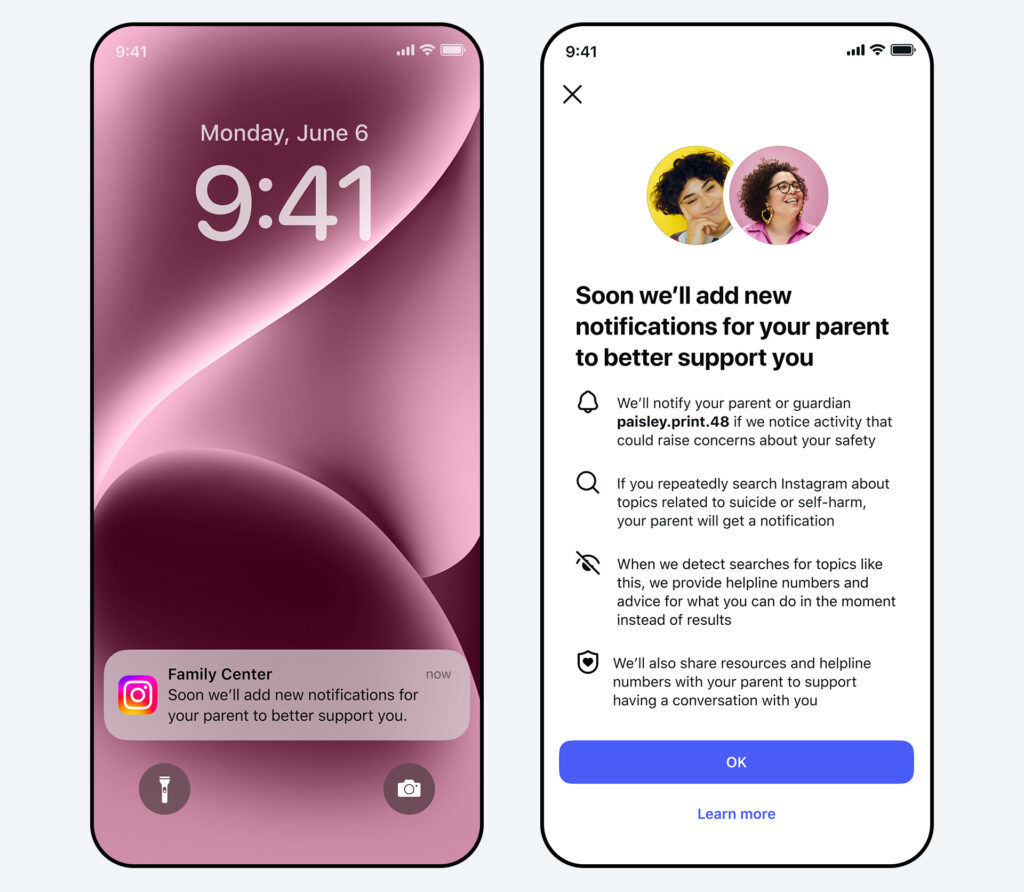

An example of what the warning will look like.

Image via Meta Newsroom

What appears when the warning is opened.

Image via Meta NewsroomThe parental alerts will also come with resources to help parents approach sensitive topics with their children.

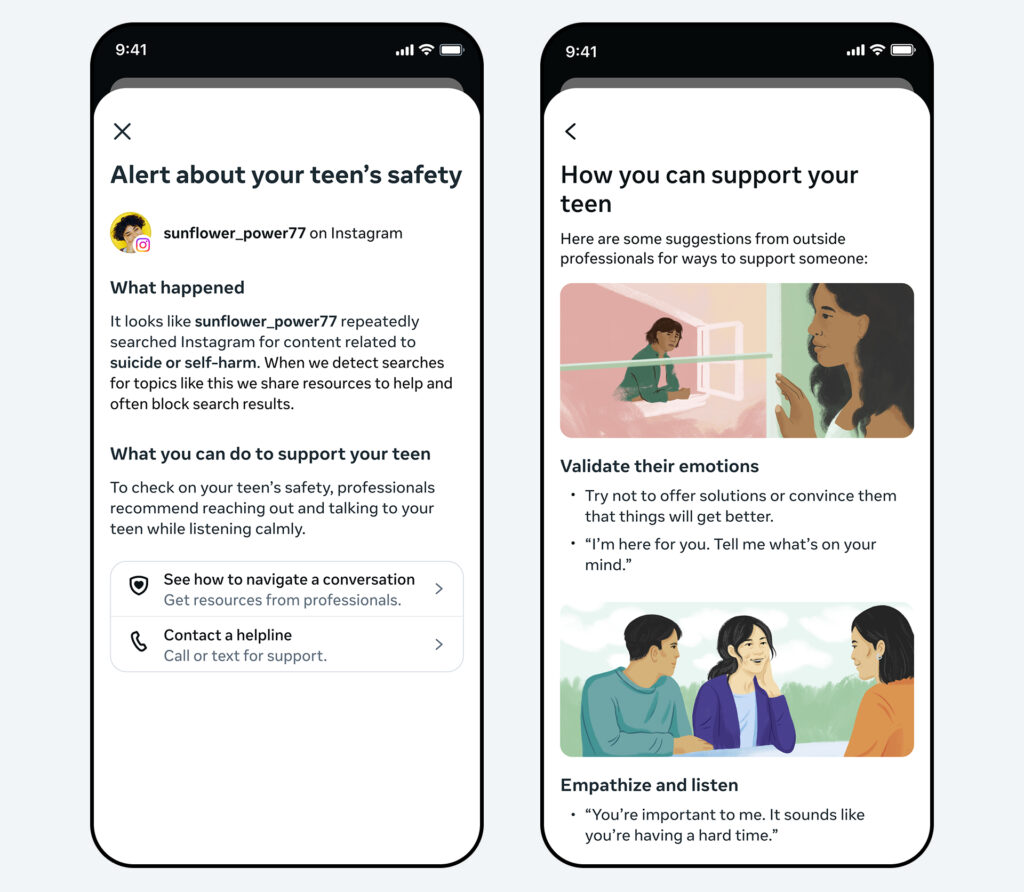

By default, Instagram blocks the results of searches for harmful content and directs the user to resources and helplines that offer support.

A warning will be sent to parents only after a threshold has been reached

The threshold keeps unnecessary warnings to a minimum so that the alerts remain useful.

Meta added that they consulted experts from their Suicide and Self-Harm Advisory Group and analysed Instagram search behaviour to set a threshold that requires multiple searches within a certain time period.

This allows Instagram to err on the side of caution when alerting parents, though it may result in some false alerts where there is no cause for concern.

An example of a blocked search result.

Image via Meta Newsroom

The move comes amid increased global scrutiny over Meta's handling of underage users

Reuters reported on an ongoing suit in Los Angeles, California, over accusations that Meta and its platforms harmed children's mental health by causing addiction.

Meanwhile, Australia has banned the use of social media by users under 16, and a similar ban for underage users will come into effect in Malaysia this year.

Asking for help is not a sign of weakness.

If you or anyone you know is lonely, distressed, or having negative thoughts, please call these Malaysian hotlines:

1. BEFRIENDERS KL

24-hour

Contact: +603-76272929

Email: [email protected]

Website | Facebook | Twitter

2. TALIAN KASIH

24-hour

Contact: 15999

WhatsApp: +6019-2615999

Email: [email protected]

3. BUDDY BEAR CHILDLINE

Daily (12pm – 12am)

Contact: 1800-18-2327

Email: [email protected]y

Facebook | Instagram

For a more thorough directory of resources, head over to the websites of Malaysian Mental Health Association or MINDAKAMI.