5 Ways Scammers Are Using AI & How To Protect Yourself From Them

Online fraud has become faster, cheaper, and far more convincing than ever before.


Artificial intelligence can make your workflow more efficient and help with menial tasks, but it has also become a powerful tool for scammers.


Here's how scammers are using AI today and what you can actually do to protect yourself.

1. AI-written phishing scams

Scam messages and emails used to be easy to spot. We knew to look out for broken English, weird grammar, and awkward phrasing.

AI has largely changed that. Today, "jailbroken" LLMs (like FraudGPT or WormGPT) allow scammers to generate fluent, culturally nuanced messages in almost any language.

In 2025, a Malaysian woman fell victim to scam emails that claimed to be from her boss, asking for financial help in the form of Razer Gold vouchers. She ended up losing RM5,000.

How to protect yourself:

- Never click links directly from emails, SMSes, or WhatsApp messages from unknown numbers. Open a new tab and log in to the service yourself.

- Check the sender address carefully, not just the display name.

If the message is from your bank:

- Call your bank directly using the official number on the back of your physical card or their verified website.

- Never use the phone number provided in the suspicious SMS or email.

- Log in manually through the bank's official app or by typing the URL directly into your browser. Do not click any links to "verify your account".
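
For readers comfortable with a little Python, the advice about links can be sketched as a hostname check. This is a minimal illustration, not a complete defence: the function name `is_official_host` and the domain `shop.example` are hypothetical placeholders, and you would substitute the address you already know belongs to your bank or shop.

```python
from urllib.parse import urlsplit


def is_official_host(url: str, official_domains: set[str]) -> bool:
    """Return True only if the link's hostname is an official domain
    or a subdomain of one. Lookalike hosts fail this check."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in official_domains)


# Hypothetical official domain, used purely for illustration.
OFFICIAL = {"shop.example"}

# The real domain appears at the START of this hostname, which is a
# common trick: the host actually belongs to "secure-login.test".
suspicious = "https://shop.example.secure-login.test/verify"
genuine = "https://www.shop.example/account"

print(is_official_host(suspicious, OFFICIAL))
print(is_official_host(genuine, OFFICIAL))
```

The check compares the end of the hostname, since that is the part that determines who controls the site. Even so, typing the address yourself remains the safer habit.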

If the message is from a family member or friend:

- Call them on their "old" number. If they claim they have a new number because their phone is "broken" or "lost", try calling their original number first. If they answer, you've caught the scam.

- Ask something that only the real person would know and that isn't on social media.

- Check their language. Is your sibling or friend who usually calls you by a nickname suddenly using your full name? If the tone feels "off", it probably is.

If the message is from a colleague or your boss:

- Verify via a different channel. If they messaged you on WhatsApp, send them a quick ping on Slack, Microsoft Teams, or your official work email to confirm the request.

- Weigh urgency against policy. Most companies have strict policies against HR or CEOs asking for gift cards, bank transfers, or "urgent" password resets via text. If the request bypasses standard procedure, it's likely a scam.

- Look at the sender's handle. Scammers often spoof names. Check the actual email address or phone number, not just the "Display Name" that pops up on your screen.
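
Checking the real address behind a display name can also be sketched in a few lines of Python. This is an illustrative example only: the helper names `sender_domain` and `looks_spoofed` and the sample addresses are made up, and the sketch assumes you know which domain your company or bank actually sends from.

```python
from email.utils import parseaddr


def sender_domain(from_header: str) -> str:
    """Return the lowercased domain of the actual address in a From
    header, ignoring whatever display name is shown to the reader."""
    _display_name, address = parseaddr(from_header)
    return address.rpartition("@")[2].lower()


def looks_spoofed(from_header: str, expected_domain: str) -> bool:
    """Return True when the real address is not on the expected domain
    (or one of its subdomains)."""
    domain = sender_domain(from_header)
    expected = expected_domain.lower()
    return not (domain == expected or domain.endswith("." + expected))


# Hypothetical headers: the display name is identical in both, but only
# the second comes from the expected domain.
fake = '"Your Boss" <boss@examp1e-mail.test>'
real = '"Your Boss" <boss@example.com>'

print(looks_spoofed(fake, "example.com"))
print(looks_spoofed(real, "example.com"))
```

A matching domain is not proof that a message is genuine, since accounts can be compromised, so it is still worth confirming unusual requests through a second channel.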

2. AI voice cloning

AI can now clone a person's voice using just a few seconds of audio. That audio can come from social media platforms such as TikTok, Instagram, and YouTube, or from WhatsApp voice notes. Scammers use this to impersonate family members in distress.

In August 2024, The Star reported on three deepfake phone scams. One involved a 63-year-old man in Terengganu who was deceived by an AI voice mimicking a friend's request for help. The man transferred RM49,800 to a fraudulent account.

Image used for illustration purposes only.

Image via Canva

How to protect yourself:

- Set a family safe word for emergencies.

- For work: never approve payments based on voice notes alone.

- Call back using a known number, not the one that contacted you.

3. Deepfake videos & fake public figures

AI-generated videos can now convincingly mimic real people, including politicians and celebrities. These are especially dangerous on social media platforms such as Facebook, where context is thin and videos move fast.

Multiple public figures in Malaysia, including celebrities, politicians, and members of the royal family, have been hit with AI deepfake videos and images of themselves promoting products and services, as well as asking for donations from the public.

In mid-2025, AI-generated videos of Neelofa and Aznil Nawawi surfaced showing them promoting online gambling apps.

Many celebrities, including Neelofa, have had their images and identities misused by AI-driven scams to deceive the public.

Image via Moma Water

How to protect yourself:

- Be skeptical of investment opportunities shared via social media ads, even if a "celebrity" appears to endorse them.

- Cross-check verified sources by visiting the public figure's official social media profiles to confirm if the video or announcement is authentic.

- Verify government updates and national news by visiting official portals or established news websites directly.

4. AI-powered romance scams

Romance scams aren't new, but AI allows one scammer to manage hundreds of victims simultaneously with "perfect" emotional consistency.

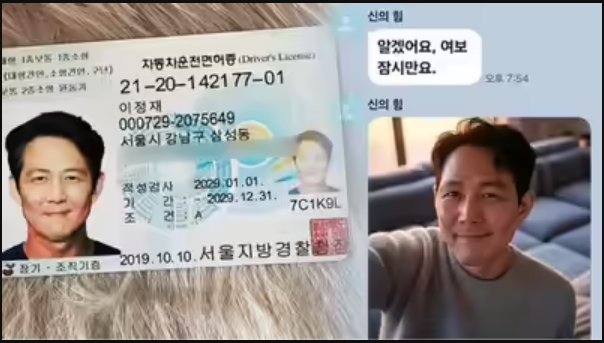

In October 2025, a woman in her 50s in South Korea lost 5 billion KRW (RM13.4 million) in an elaborate romance scam. Criminals posed as actor Lee Jung-jae, using AI-generated selfies and forged ID cards to gain her trust and simulate a romantic relationship.

A fake ID and AI-generated photo of popular 'Squid Game' actor Lee Jung-jae.

Image via India Times

How to protect yourself:

- Be cautious of relationships that move too fast.

- Reverse-image search profile photos.

- Do not trust "celebrities" or any public figure reaching out and asking for money.

- Never send money to someone you haven't met, no matter the story.

5. AI-generated fake websites & ads

AI can now generate entire websites in minutes, and scammers are exploiting this to clone everything from Shopee and major banks to seemingly authentic small businesses.

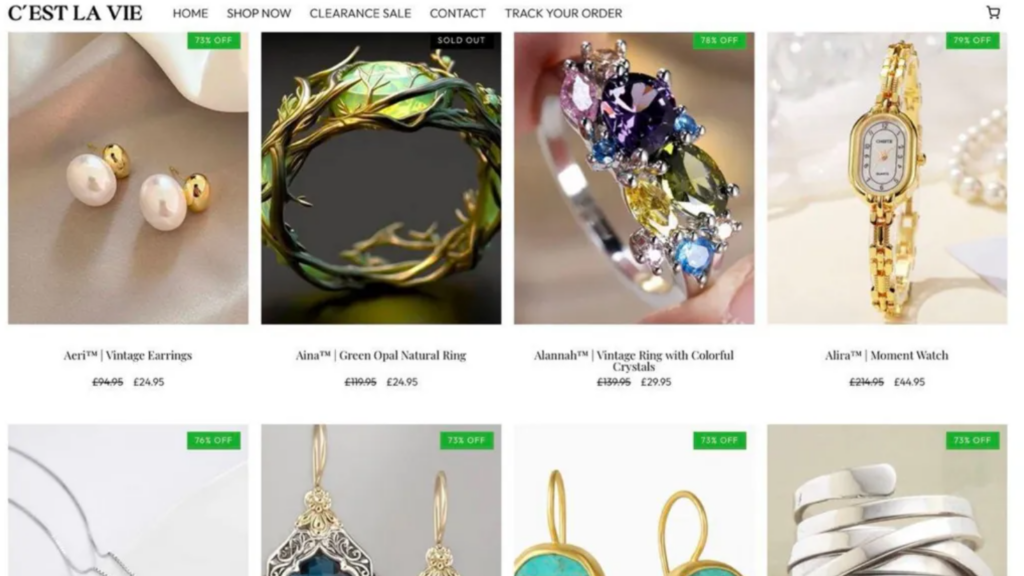

One example is the C'est La Vie scam, where fraudsters used AI-generated images and a fabricated backstory to pose as a long-running, family-run jewellery boutique in Birmingham, complete with a grieving widow narrative and an 80%-off "closing down" sale.

Shoppers lured in by polished visuals and emotional storytelling later discovered the business did not exist, and received cheap, mass-produced items shipped from Asia instead of handmade jewellery.

The images of boutique jewellery store founders "Patrick and Eileen" were AI-generated.

Image via BBC

All the listed products on the website claimed huge discounts, but the shipped products looked nothing like the images.

Image via BBC

How to protect yourself:

- Look for a physical address, phone number, and refund policy.

- Be wary of deals that are dramatically cheaper than everywhere else.

- Check for detailed online reviews that go beyond generic descriptions.

REAL ke AI? Fact-check before you act. Everyone plays a role.